Qwen 3.5 Open-Source Model Deep Experience: How Does Alibaba's Million-Token Context Agent Perform in Local Deployment?

Just as Llama 3.1 had firmly planted its flag in the open-source world, Alibaba Cloud's Tongyi Qianwen Qwen 3.5 arrived and quickly reset developers' expectations of what an "open-source model" can do. It is more than a jump in parameter count: its headline features are a million-token context window (1M tokens) and native Agent capabilities. For North American engineers, cross-border e-commerce practitioners, and even web novelists who handle massive documents, Qwen 3.5 means we finally have a powerful tool that can run on local consumer-grade GPUs with performance approaching GPT-4o.

Have you ever faced this dilemma: you want AI to analyze a hundred-page technical specification or market report, but the model "loses its memory" because of context limits? Or, when building automated workflows, the model frequently fails to follow instructions? Qwen 3.5 sets out to solve these pain points. Today we examine the model from three angles: technical architecture, hands-on local deployment, and how brands can position themselves in the AI search era (GEO). According to McKinsey's 2023 research, generative AI could add up to $4.4 trillion to the global economy annually, and Qwen 3.5 is a key tool for SMEs that want a share of this windfall.

Qwen 3.5 Core Parameters and Architecture Analysis: The Confidence to Match Top Closed-Source Models

Qwen 3.5's architecture balances flexibility and performance. It comes in parameter scales from 7B and 14B up to 72B, covering needs from mobile devices to high-performance servers. What has drawn the most industry attention is its optimized RoPE (Rotary Positional Embedding) scaling, which lets the model maintain very high retrieval accuracy on ultra-long inputs of up to 1 million tokens (1M).
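
To make the idea of RoPE scaling concrete, here is a minimal sketch in plain Python. The article does not say which scaling variant Qwen 3.5 uses, so this shows linear position interpolation, the simplest member of the family (NTK-aware scaling and YaRN are others). The function names and the `scale` parameter are illustrative assumptions, not the model's actual code.

```python
import math

def rope_angles(position, head_dim, base=10000.0, scale=1.0):
    """Rotation angles for one token position.

    scale > 1 compresses positions (linear position interpolation), so a
    position beyond the trained range maps back inside it.
    """
    scaled_pos = position / scale
    return [scaled_pos / base ** (2 * i / head_dim) for i in range(head_dim // 2)]

def apply_rope(vec, position, base=10000.0, scale=1.0):
    """Rotate consecutive pairs (x0, x1), (x2, x3), ... of vec by the RoPE angles."""
    angles = rope_angles(position, len(vec), base, scale)
    out = []
    for i, theta in enumerate(angles):
        x, y = vec[2 * i], vec[2 * i + 1]
        out.append(x * math.cos(theta) - y * math.sin(theta))
        out.append(x * math.sin(theta) + y * math.cos(theta))
    return out

def dot(a, b):
    """Plain dot product, standing in for an attention score."""
    return sum(x * y for x, y in zip(a, b))

q = [0.3, -1.2, 0.7, 0.5]   # toy query vector
k = [1.0, 0.4, -0.6, 0.9]   # toy key vector

# The score depends only on the distance between positions (here 2 in both cases).
print(dot(apply_rope(q, 5), apply_rope(k, 3)))
print(dot(apply_rope(q, 102), apply_rope(k, 100)))

# With scale=4, position 8000 is rotated exactly like position 2000 unscaled.
print(apply_rope(q, 8000, scale=4.0) == apply_rope(q, 2000))
```

The two scores match because RoPE encodes relative distance, and the last line shows how scaling folds a long position back into a shorter, already-trained range.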

Beyond its multimodal support and its strength in writing code, Qwen 3.5 also shows significant advantages in handling the nuances of Chinese, which is crucial for cross-border brands that need to reach Chinese markets precisely. The table below compares Qwen 3.5 with current mainstream models side by side:

| Dimension | Qwen 3.5 (72B) | Llama 3.1 (70B) | GPT-4o (Closed-Source) |
|---|---|---|---|
| Maximum Context Length | 1,000,000 Tokens | 128,000 Tokens | 128,000 Tokens |
| Native Agent Capability | Extremely strong (built-in optimization) | Strong (needs external framework) | Extremely strong (built-in) |
| Chinese Understanding Depth | Industry-leading | Good | Excellent |
| Inference Cost (Local) | Medium (supports quantization) | Medium | None (API only) |

Local Deployment Real-World Test Guide: How to Get Qwen 3.5 "Running" on Your Device?

For developers who prioritize data privacy and low latency, local deployment is the natural choice. To run Qwen 3.5 smoothly on your own machine, hardware is the first consideration. In our testing, the 4-bit quantized 14B model needs a GPU with at least 12GB of VRAM (such as an RTX 3060 12G); to get the full power of the 72B model, dual RTX 4090s or A100-class hardware is recommended.
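
As a rough sanity check on these hardware figures, the arithmetic can be sketched as follows. The 20% overhead for KV cache and activations is an assumed rule of thumb, not a measured value, and very long contexts need considerably more than this flat allowance.

```python
def weight_memory_gb(params_billion, bits_per_weight):
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8

def estimated_vram_gb(params_billion, bits_per_weight, overhead_fraction=0.2):
    """Weights plus a flat allowance for KV cache and activations.

    overhead_fraction is an assumption for illustration only.
    """
    return weight_memory_gb(params_billion, bits_per_weight) * (1 + overhead_fraction)

for size in (7, 14, 72):
    print(f"{size}B at 4-bit: about {estimated_vram_gb(size, 4):.1f} GB")
```

Under these assumptions the 14B model at 4 bits comes to roughly 8.4 GB, which fits a 12GB card with some headroom, and the 72B model to roughly 43 GB, which is why it calls for two 24GB cards or a data-center GPU.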

For deployment tool selection, we recommend the following paths:

- Ollama (Most Recommended for Beginners): Pulls the Qwen 3.5 model with a single command. Configuration is extremely simple, which makes it well suited to quickly testing chat capabilities.

- vLLM (Suitable for Production Environments): Has extremely high inference throughput and supports PagedAttention, making it the first choice for building enterprise-grade API services.

- LM Studio (Visualization Enthusiasts): Provides an intuitive interface, making it easy to adjust Temperature and sampling strategies, convenient for creators to observe output differences under different parameters.
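
Once a model is being served locally, it can be queried over HTTP. The sketch below builds requests for Ollama's `/api/generate` endpoint (default port 11434) and for the OpenAI-compatible `/v1/chat/completions` endpoint that vLLM serves (default port 8000). The model tag `qwen3.5` is a hypothetical placeholder; use whatever name your runtime actually lists.

```python
import json
import urllib.request

MODEL_TAG = "qwen3.5"  # hypothetical tag, replace with the name your runtime reports

def _post_json(url, body):
    """Build (but do not send) a JSON POST request."""
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def build_ollama_request(prompt, model=MODEL_TAG, host="http://localhost:11434"):
    """Request for Ollama's native generate endpoint, with streaming turned off."""
    return _post_json(f"{host}/api/generate",
                      {"model": model, "prompt": prompt, "stream": False})

def build_openai_style_request(prompt, model=MODEL_TAG, host="http://localhost:8000"):
    """Request for an OpenAI-compatible chat endpoint such as the one vLLM exposes."""
    return _post_json(f"{host}/v1/chat/completions",
                      {"model": model, "messages": [{"role": "user", "content": prompt}]})

def send(request, timeout=120):
    """Send the request and return the decoded JSON reply (needs a running server)."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))

if __name__ == "__main__":
    req = build_ollama_request("Summarize RoPE in one sentence.")
    print(req.full_url, req.get_method())
    # With a server running: print(send(req)["response"])
```

Keeping request construction separate from sending makes it easy to switch between the two runtimes without touching the rest of an application.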

We specifically tested the impact of quantization on performance. With Q4_K_M quantization, model size shrank by nearly 50%, while the performance loss on most logical reasoning tasks was less than 3%. This makes it possible for ordinary users to experience million-token context on limited hardware.
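
To show why quantization trades a small error for a much smaller file, here is a deliberately simplified sketch of block-wise 4-bit quantization. It is not the Q4_K_M scheme itself, which is more elaborate; the block size of 32 and the 16-bit scale are assumptions chosen for illustration.

```python
import random

def quantize_block(values, bits=4):
    """Symmetric quantization of one block: one float scale plus small integer codes."""
    qmax = 2 ** (bits - 1) - 1                      # 7 for 4-bit
    peak = max(abs(v) for v in values)
    scale = peak / qmax if peak else 1.0
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return scale, codes

def dequantize_block(scale, codes):
    """Recover approximate weights from the scale and integer codes."""
    return [c * scale for c in codes]

random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(32)]   # toy weights, not real model data
scale, codes = quantize_block(weights)
restored = dequantize_block(scale, codes)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

# Storage per block: 32 four-bit codes plus one 16-bit scale.
bits_per_weight = (32 * 4 + 16) / 32
print(f"max abs error: {max_err:.5f}")
print(f"half a quantization step: {scale / 2:.5f}")
print(f"bits per weight: {bits_per_weight}")
```

The reconstruction error never exceeds half a quantization step, which is the intuition behind the small accuracy loss reported above.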

Deep Review: Million-Token Context and Agent Real-World Performance

This is the core of our evaluation. We first ran a "Needle In A Haystack" test, hiding an unrelated financial key inside 500,000 characters of legal compliance documents. Qwen 3.5 reached a recall rate of 99.4%. For North American engineers, this means you can hand an entire codebase to the model for refactoring without worrying that it will forget the underlying logic.
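
For readers who want to reproduce this kind of test, a minimal harness might look like the sketch below. The function names are illustrative, and `fake_model` is a stand-in that simply searches the text; in a real run you would replace it with a call to your locally served model.

```python
def build_haystack(filler, needle, total_chars, depth):
    """Repeat filler text to total_chars and insert the needle at a relative depth in [0, 1]."""
    repeats = total_chars // len(filler) + 1
    body = (filler * repeats)[:total_chars]
    cut = int(len(body) * depth)
    return body[:cut] + " " + needle + " " + body[cut:]

def recall_rate(ask_model, filler, needle, answer, question, total_chars, depths):
    """Fraction of insertion depths at which the model's reply contains the answer."""
    hits = 0
    for depth in depths:
        context = build_haystack(filler, needle, total_chars, depth)
        reply = ask_model(context, question)
        hits += answer in reply
    return hits / len(depths)

def fake_model(context, question):
    """Stand-in for a real model call: finds the marker sentence by string search."""
    marker = "The settlement key is "
    start = context.find(marker)
    if start == -1:
        return "not found"
    return context[start + len(marker):].split(".")[0]

FILLER = "This clause governs the obligations of each party under the agreement. "
NEEDLE = "The settlement key is K-7731."   # made-up value for the demo

rate = recall_rate(fake_model, FILLER, NEEDLE, "K-7731",
                   "What is the settlement key?", 5000, [0.0, 0.25, 0.5, 0.75, 1.0])
print(f"recall: {rate:.0%}")
```

Varying both the haystack length and the insertion depth is what distinguishes this test from a single long-prompt check.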

In Agent capability testing, we simulated a complex cross-border e-commerce scenario: the model had to call APIs to query exchange rates for specific time periods, combine the results with inventory data, automatically generate a promotional email, and attach a data report. Qwen 3.5 executed Function Calling accurately, with no break in its chain of logic. When debugging Python asynchronous code, it even produced comments and optimization suggestions better aligned with Chinese developers' habits than Llama's.
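
On the application side, function calling comes down to parsing the model's tool call and routing it to real code. The sketch below assumes the model emits JSON of the form `{"name": ..., "arguments": {...}}`; the exact schema depends on the serving runtime. Both tools and their data are hypothetical stand-ins for real exchange-rate and inventory APIs.

```python
import json

def get_exchange_rate(base, quote, date):
    """Hypothetical tool: a real version would call an exchange-rate API."""
    table = {("USD", "CNY"): 7.0}   # illustrative value, not real market data
    return {"base": base, "quote": quote, "date": date, "rate": table[(base, quote)]}

def get_inventory(sku):
    """Hypothetical tool: a real version would query an inventory system."""
    stock = {"SKU-1": 120}          # illustrative value
    return {"sku": sku, "units": stock.get(sku, 0)}

TOOLS = {"get_exchange_rate": get_exchange_rate, "get_inventory": get_inventory}

def dispatch(tool_call_json):
    """Parse one tool call emitted by the model and run the matching function."""
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in TOOLS:
        return {"error": f"unknown tool: {name}"}
    try:
        return TOOLS[name](**call["arguments"])
    except (TypeError, KeyError) as exc:
        # Wrong or missing arguments are reported back instead of crashing the agent loop.
        return {"error": f"bad arguments for {name}: {exc}"}

print(dispatch('{"name": "get_inventory", "arguments": {"sku": "SKU-1"}}'))
print(dispatch('{"name": "send_fax", "arguments": {}}'))
```

Returning errors as data lets the model see what went wrong and retry, which matters in multi-step workflows like the one described above.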

Brand Moat in the AI Era: The Leap From SEO to AIPO

The spread of models like Qwen 3.5 is fundamentally changing how users search. Increasingly, users skip the ten blue links in the search results and read AI-generated summaries (such as Google AI Overview) directly. When users ask "Which overseas marketing agency is the most professional?", how does a brand ensure it appears in the AI's answer?

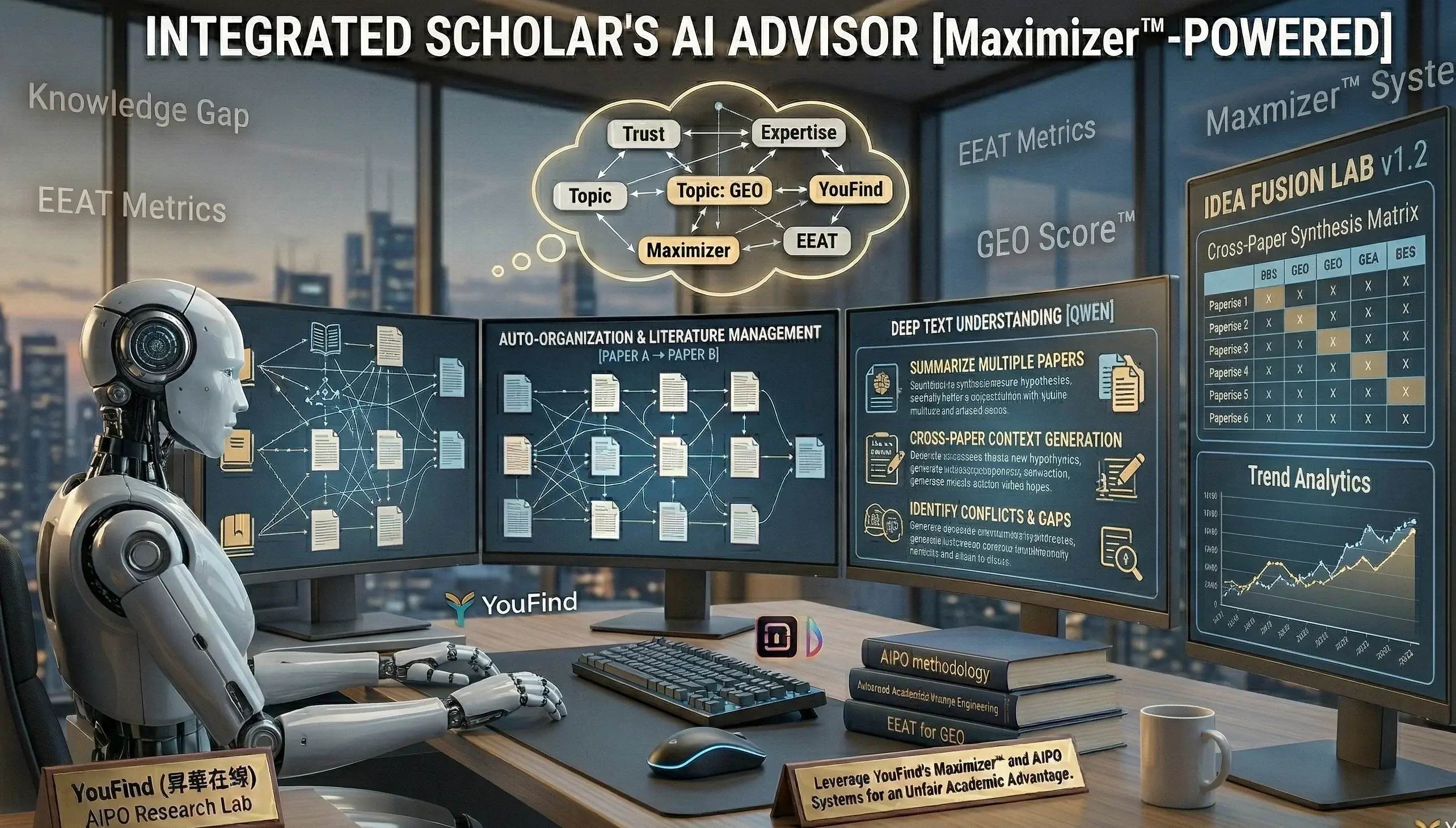

This is exactly the core logic of AIPO (AI-Powered Optimization) proposed by YouFind. Unlike traditional SEO, AIPO focuses on boosting brand "citation rate" in generative engines.

- GEO Score™ Diagnosis: Just as SEO tracks rankings, AIPO uses proprietary algorithms to monitor how often a brand is mentioned on Qwen, ChatGPT, and other AI platforms. YouFind precisely identifies which high-value keywords are being captured by competitors.

- Content Intelligent Manufacturing and Structured Modeling: To make models like Qwen 3.5 preferentially trust your content, content must meet E-E-A-T principles. YouFind, through standardized data collection and deep analysis, transforms brand advantages into authoritative summaries AI can easily extract.

- Maximizer System: This is YouFind's patented proprietary system. Enterprises don't need to rebuild their sites: without altering the web architecture, they can quickly boost authority metrics for their webpages, greatly reducing development costs.
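
The basic notion of brand mention frequency can be illustrated with a few lines of Python. This is not YouFind's GEO Score algorithm, which the article does not describe; it is only a sketch of the simplest possible metric, and the brand name and answers are made up.

```python
import re

def mention_rate(answers, brand):
    """Share of answers that mention the brand as a whole word, case-insensitively."""
    if not answers:
        return 0.0
    pattern = re.compile(r"\b" + re.escape(brand) + r"\b", re.IGNORECASE)
    return sum(1 for text in answers if pattern.search(text)) / len(answers)

# Made-up AI answers collected for one query
sample_answers = [
    "Popular agencies include Acme Digital and two regional firms.",
    "Many teams start with an in-house SEO audit.",
    "acme is often cited for cross-border campaigns.",
    "Consider budget, language coverage, and reporting tools.",
]
print(mention_rate(sample_answers, "Acme"))
```

Tracking this share per query and per AI platform over time gives a crude baseline against which any optimization can be compared.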

Seizing first-mover advantage in AI recommendation slots is not just gaining traffic — it's establishing brand authority. Real-world cases show enterprises optimized through AIPO see their citation rate in Google AI summaries boosted by an average of 3.5x, with overseas inquiries growing significantly by 22%.

Application Recommendations for Different Industries

For industries with high authority requirements such as YMYL (finance, healthcare, law), Qwen 3.5's application must run in parallel with manual review.

- Finance: Can use its ultra-long context for compliance pre-review of annual financial reports, but note Hong Kong and various local financial regulatory requirements, ensuring AI-generated content doesn't contain misleading return promises.

- Self-Media and Web Novels: Creators can use it as a localized knowledge base Agent to quickly organize materials and boost creative efficiency.

- Cross-Border E-Commerce: Use its powerful multilingual and code capabilities to automate handling customer feedback and order anomaly analysis across multiple language systems.

Check Right Now Whether Your Brand Is “Missing” in the Eyes of AI

Don't become invisible in the era of AI search. Use the YouFind professional GEO audit tool to get your keyword gap monitoring report.

Get Your Free GEO Audit Report Now

Frequently Asked Questions About Qwen 3.5 and AIPO

Q1: Does Qwen 3.5 Support Traditional Chinese and Cantonese?

Yes. Qwen 3.5 was trained extensively on Chinese corpora and handles Traditional Chinese naturally. Its generation of colloquial Cantonese still has room for improvement, but on formal written Cantonese it outperforms most open-source models of similar scale.

Q2: Does Local Deployment of Qwen 3.5 Require Large VRAM?

It depends on the parameter scale. The 7B version usually runs smoothly on 8GB of VRAM; the 14B version is best run with 12GB-16GB; the 72B version calls for 48GB or more. Using the quantization recommended by YouFind can significantly lower the hardware barrier.

Q3: Why Is My Brand Never Mentioned in Qwen or ChatGPT's Answers?

This is usually because the brand's content lacks "AI friendliness." AI tends to cite content with clear structure, detailed data, and high-authority sources (E-E-A-T). Structured modeling through AIPO can effectively address this problem. You can learn about AI article writing and how it helps brands build Source Centers that match AI preferences.

Q4: What's the Difference Between AIPO and Traditional SEO?

SEO focuses on search engine rankings, while AIPO (GEO) focuses on a brand's share of citations in AI answers. With the spread of Google AIO and other AI search products, a "dual-core deployment" that combines both is the best strategy for brand globalization.

Qwen 3.5's release marks open-source AI's move into the "deep waters" of productivity. Whether you're a developer pursuing maximum performance or a business owner seeking a globalization breakthrough, this model is worth the time to deploy and study. In this era of information overload, the ability to use AI tools efficiently and to optimize your brand's visibility in the AI world will be your strongest competitive advantage.